Most AI teams focus on the wrong things. Here’s a common scene from my consulting work:

AI TEAM

Here’s our agent architecture: we’ve got RAG here, a router there, and we’re using this new framework for…

ME

[Holding up my hand to pause the enthusiastic tech lead]

Can you show me how you’re measuring if any of this actually works?

… Room goes quiet

This scene has played out dozens of times over the last two years. Teams invest weeks building complex AI systems but can’t tell me if their changes are helping or hurting.

This isn’t surprising. With new tools and frameworks emerging weekly, it’s natural to focus on tangible things we can control: which vector database to use, which LLM provider to choose, which agent framework to adopt. But after helping 30+ companies build AI products, I’ve discovered that the teams who succeed barely talk about tools at all. Instead, they obsess over measurement and iteration.

In this post, I’ll show you exactly how these successful teams operate. While every situation is unique, you’ll see patterns that apply regardless of your domain or team size. Let’s start by examining the most common mistake I see teams make, one that derails AI projects before they even begin.

The Most Common Mistake: Skipping Error Analysis

The “tools first” mindset is the most common mistake in AI development. Teams get caught up in architecture diagrams, frameworks, and dashboards while neglecting the process of actually understanding what’s working and what isn’t.

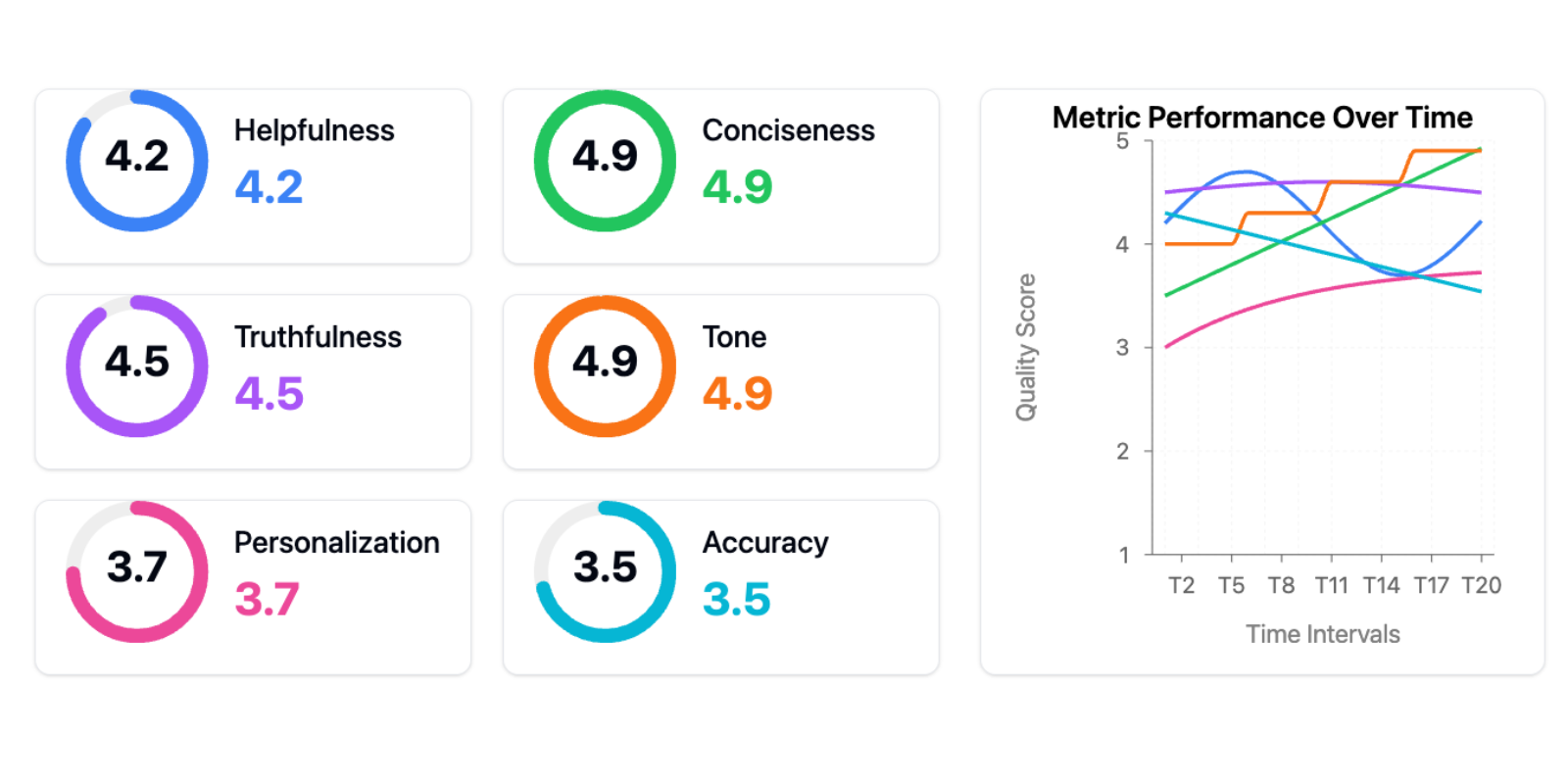

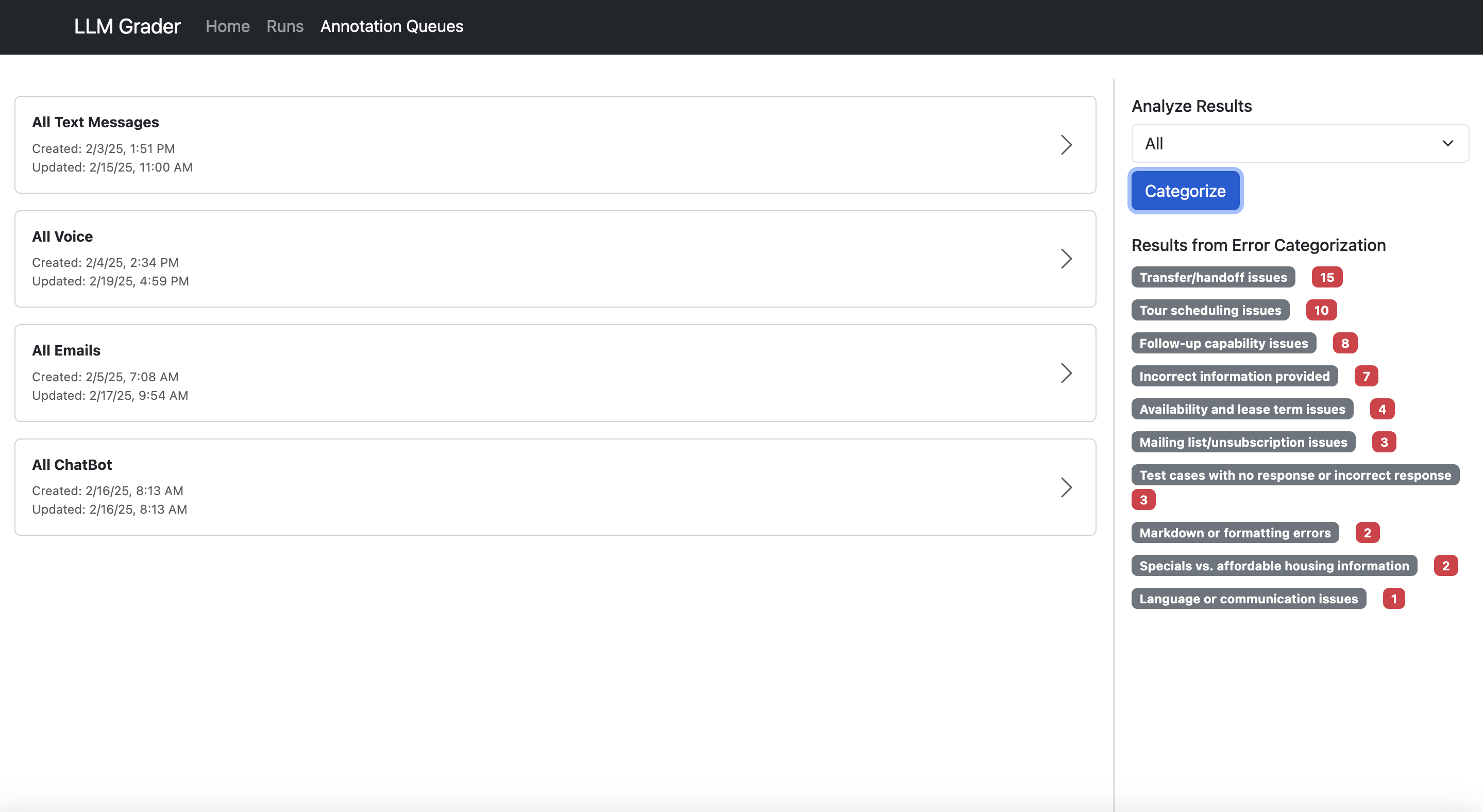

One client proudly showed me this evaluation dashboard:

This is the “tools trap”: the belief that adopting the right tools or frameworks (in this case, generic metrics) will solve your AI problems. Generic metrics are worse than useless. They actively impede progress in two ways:

First, they create a false sense of measurement and progress. Teams think they’re data-driven because they have dashboards, but they’re tracking vanity metrics that don’t correlate with real user problems. I’ve seen teams celebrate improving their “helpfulness score” by 10% while their actual users were still struggling with basic tasks. It’s like optimizing your website’s load time while your checkout process is broken: you’re getting better at the wrong thing.

Second, too many metrics fragment your attention. Instead of focusing on the few metrics that matter for your specific use case, you’re trying to optimize multiple dimensions simultaneously. When everything is important, nothing is.

The alternative? Error analysis: the single most valuable and consistently highest-ROI activity in AI development. Let me show you what effective error analysis looks like in practice.

The Error Analysis Process

When Jacob, the founder of Nurture Boss, needed to improve the company’s apartment-industry AI assistant, his team built a simple viewer to examine conversations between their AI and users. Next to each conversation was a space for open-ended notes about failure modes.

After annotating dozens of conversations, clear patterns emerged. Their AI was struggling with date handling, failing 66% of the time when users said things like “Let’s schedule a tour two weeks from now.”

Instead of reaching for new tools, they:

- Looked at actual conversation logs

- Categorized the types of date-handling failures

- Built specific tests to catch these issues

- Measured improvement on these metrics

The result? Their date handling success rate improved from 33% to 95%.
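To make "specific tests" and "measured improvement" concrete, here is a minimal sketch of a date-handling test set with a pass rate. Everything in it is an assumption for illustration: the test cases, the fixed `TODAY`, and the toy `resolve_date` function, which stands in for whatever call returns the date your assistant actually extracted. It is not Nurture Boss's code.

```python
import re
from datetime import date, timedelta

# Hypothetical reference date so that expected answers are deterministic.
TODAY = date(2025, 1, 6)

# Hypothetical test cases: (user message, date the assistant should resolve).
CASES = [
    ("Let's schedule a tour two weeks from now", TODAY + timedelta(weeks=2)),
    ("Can I come by tomorrow?", TODAY + timedelta(days=1)),
    ("How about 3 days from now?", TODAY + timedelta(days=3)),
]

WORD_TO_NUM = {"one": 1, "two": 2, "three": 3, "four": 4}


def resolve_date(message, today):
    """Toy stand-in for the assistant's date handling; returns a date or None."""
    text = message.lower()
    if "tomorrow" in text:
        return today + timedelta(days=1)
    match = re.search(r"(\d+|one|two|three|four) (day|week)s? from now", text)
    if match is None:
        return None
    amount, unit = match.group(1), match.group(2)
    n = int(amount) if amount.isdigit() else WORD_TO_NUM[amount]
    return today + (timedelta(weeks=n) if unit == "week" else timedelta(days=n))


def pass_rate(cases, system, today):
    """Fraction of cases where the system resolved exactly the expected date."""
    passed = sum(1 for message, expected in cases if system(message, today) == expected)
    return passed / len(cases)


rate = pass_rate(CASES, resolve_date, TODAY)
print(f"date handling pass rate: {rate:.0%}")
```

Rerunning the same cases after each prompt or code change gives a before-and-after number of the kind quoted above.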

Here’s Jacob explaining this process himself:

Bottom-Up Versus Top-Down Analysis

When identifying error types, you can take either a “top-down” or “bottom-up” approach.

The top-down approach starts with common metrics like “hallucination” or “toxicity” plus metrics unique to your task. While convenient, it often misses domain-specific issues.

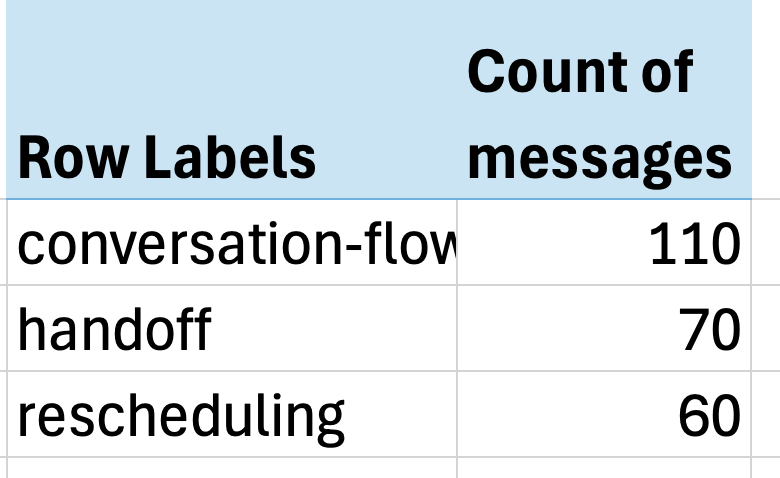

The more effective bottom-up approach forces you to look at actual data and let metrics naturally emerge. At Nurture Boss, we started with a spreadsheet where each row represented a conversation. We wrote open-ended notes on any undesired behavior. Then we used an LLM to build a taxonomy of common failure modes. Finally, we mapped each row to specific failure mode labels and counted the frequency of each issue.

The results were striking. Just three issues accounted for over 60% of all problems:

- Conversation flow issues (missing context, awkward responses)

- Handoff failures (not recognizing when to transfer to humans)

- Rescheduling problems (struggling with date handling)
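The final counting step of the bottom-up process is simple enough to sketch. The labels below are made up for illustration (one label per annotated conversation is an assumption; real rows may carry several), and the numbers are not the Nurture Boss results.

```python
from collections import Counter


def failure_mode_breakdown(labels):
    """Return (label, count, share, cumulative_share) rows, most frequent first."""
    counts = Counter(labels)
    total = sum(counts.values())
    rows, running = [], 0
    for label, count in counts.most_common():
        running += count
        rows.append((label, count, count / total, running / total))
    return rows


# Hypothetical failure-mode labels, one per annotated conversation.
labels = (
    ["conversation_flow"] * 3
    + ["handoff_failure"] * 2
    + ["rescheduling"] * 2
    + ["tone", "wrong_property", "other"]
)

rows = failure_mode_breakdown(labels)
for label, count, share, cumulative in rows:
    print(f"{label:20s} {count:3d} {share:6.0%} {cumulative:6.0%}")
```

The cumulative column is what shows whether a handful of failure modes dominate, which is the signal for where to spend the next few weeks.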

The impact was immediate. Jacob’s team had uncovered so many actionable insights that they needed several weeks just to implement fixes for the problems we’d already found.

If you’d like to see error analysis in action, we recorded a live walkthrough here.

This brings us to a crucial question: How do you make it easy for teams to look at their data? The answer leads us to what I consider the most important investment any AI team can make…

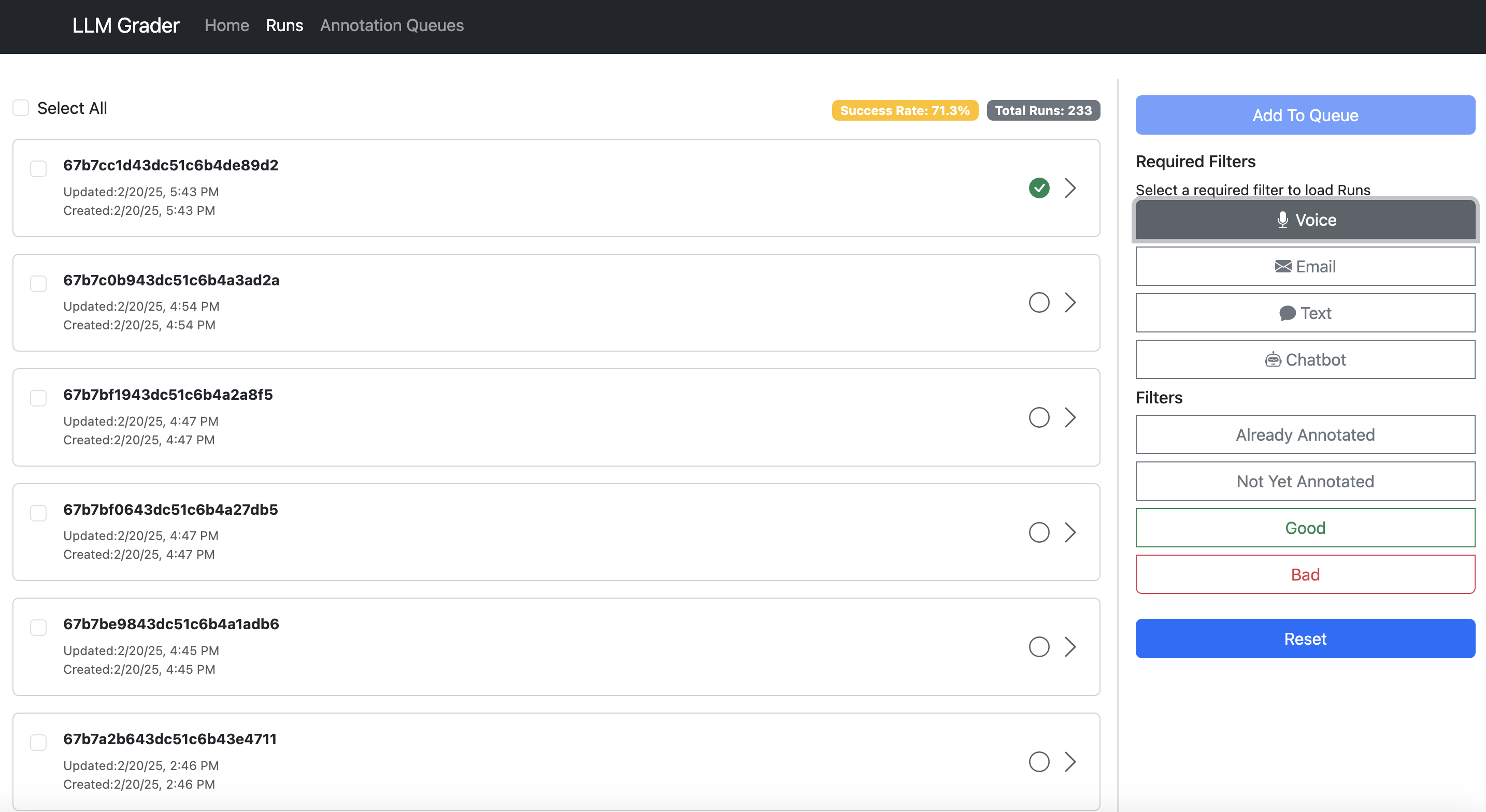

The Most Important AI Investment: A Simple Data Viewer

The single most impactful investment I’ve seen AI teams make isn’t a fancy evaluation dashboard. It’s building a customized interface that lets anyone examine what their AI is actually doing. I emphasize customized because every domain has unique needs that off-the-shelf tools rarely address. When reviewing apartment leasing conversations, you need to see the full chat history and scheduling context. For real estate queries, you need the property details and source documents right there. Even small UX decisions, like where to place metadata or which filters to expose, can make the difference between a tool people actually use and one they avoid.

I’ve watched teams struggle with generic labeling interfaces, hunting through multiple systems just to understand a single interaction. The friction adds up: clicking through to different systems to see context, copying error descriptions into separate tracking sheets, switching between tools to verify information. This friction doesn’t just slow teams down. It actively discourages the kind of systematic analysis that catches subtle issues.

Teams with thoughtfully designed data viewers iterate 10x faster than those without them. And here’s the thing: These tools can be built in hours using AI-assisted development (like Cursor or Lovable). The investment is minimal compared to the returns.

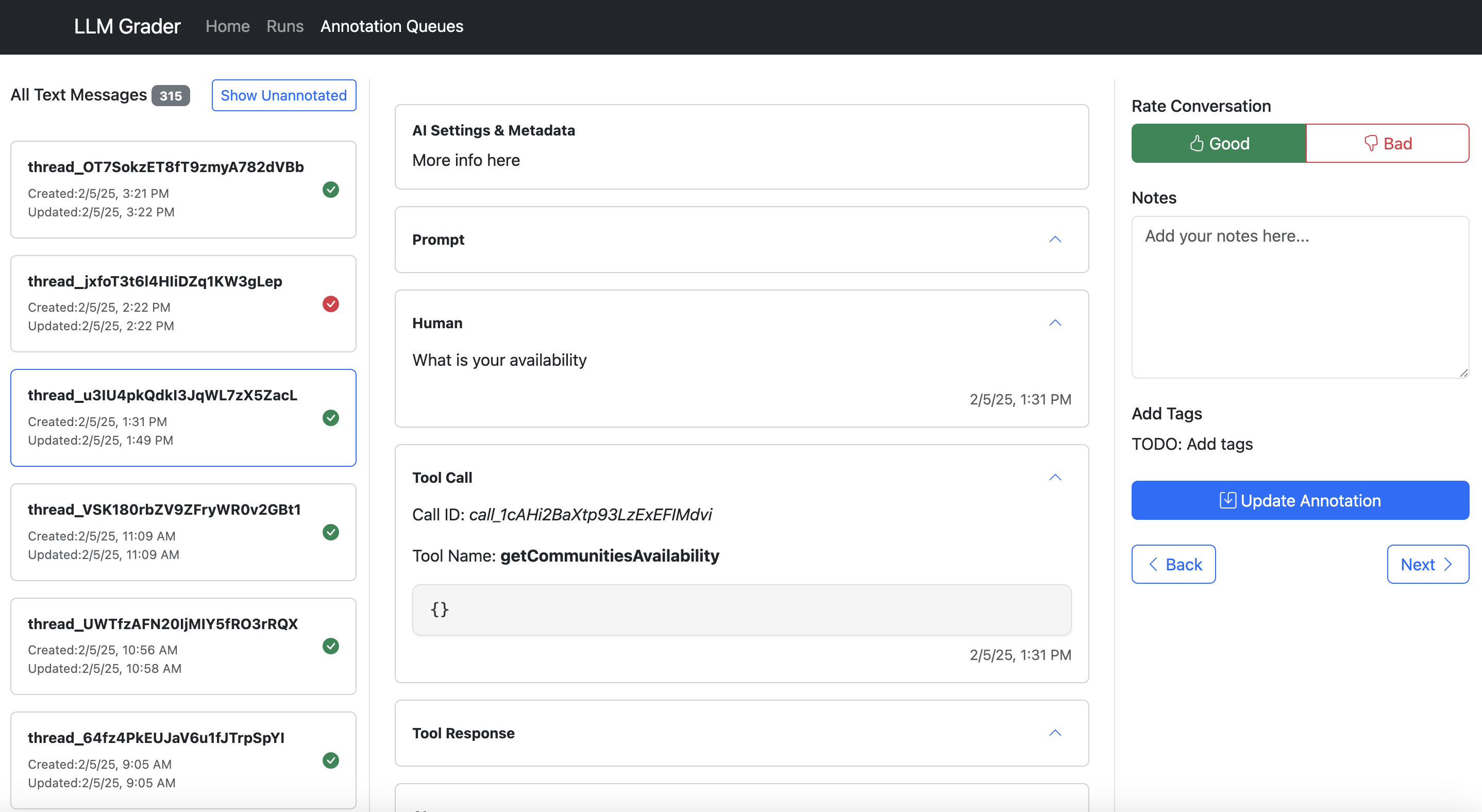

Let me show you what I mean. Here’s the data viewer built for Nurture Boss (which I discussed earlier):

Here’s what makes a good data annotation tool:

- Show all context in one place. Don’t make users hunt through different systems to understand what happened.

- Make feedback trivial to capture. One-click correct/incorrect buttons beat lengthy forms.

- Capture open-ended feedback. This lets you capture nuanced issues that don’t fit into a predefined taxonomy.

- Enable quick filtering and sorting. Teams need to easily dive into specific error types. In the example above, Nurture Boss can quickly filter by the channel (voice, text, chat) or the specific property they want to look at.

- Have hotkeys that allow users to navigate between data examples and annotate without clicking.
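Whatever the front end looks like, the data model behind such a tool can stay small. The sketch below uses only the standard library; the `Record` fields, the example conversations, and the function names are all hypothetical, chosen to mirror the points in the list above (full context, a one-click verdict, open-ended notes, and filtering).

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Record:
    """One conversation plus its annotation (all field names are illustrative)."""
    conversation_id: str
    channel: str                    # e.g. "voice", "text", "chat"
    property_name: str
    transcript: str                 # full context, shown in one place
    passed: Optional[bool] = None   # one-click correct/incorrect; None = unreviewed
    notes: str = ""                 # open-ended feedback


def annotate(record, passed, notes=""):
    """Store a binary judgment and free-form notes on a record."""
    record.passed = passed
    record.notes = notes
    return record


def filter_records(records, channel=None, property_name=None, unreviewed_only=False):
    """Return the records that match every filter that was supplied."""
    selected = []
    for r in records:
        if channel is not None and r.channel != channel:
            continue
        if property_name is not None and r.property_name != property_name:
            continue
        if unreviewed_only and r.passed is not None:
            continue
        selected.append(r)
    return selected


records = [
    Record("c1", "voice", "Oak Court", "User: Can I tour on Friday? ..."),
    Record("c2", "chat", "Oak Court", "User: Is parking included? ..."),
    Record("c3", "chat", "Elm Lofts", "User: I need to reschedule ..."),
]
annotate(records[2], passed=False, notes="Offered a date in the past")
chat_todo = filter_records(records, channel="chat", unreviewed_only=True)
```

A viewer built on top of this only has to render one `Record` at a time and bind keys to `annotate`.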

It doesn’t matter what web frameworks you use. Use whatever you’re familiar with. Because I’m a Python developer, my current favorite web framework is FastHTML coupled with MonsterUI because it allows me to define the backend and frontend code in one small Python file.

The key is starting somewhere, even if it’s simple. I’ve found custom web apps provide the best experience, but if you’re just beginning, a spreadsheet is better than nothing. As your needs grow, you can evolve your tools accordingly.

This brings us to another counterintuitive lesson: The people best positioned to improve your AI system are often the ones who know the least about AI.

Empower Domain Experts To Write Prompts

I recently worked with an education startup building an interactive learning platform with LLMs. Their product manager, a learning design expert, would create detailed PowerPoint decks explaining pedagogical principles and example dialogues. She’d present these to the engineering team, who would then translate her expertise into prompts.

But here’s the thing: Prompts are just English. Having a learning expert communicate teaching principles through PowerPoint only for engineers to translate that back into English prompts created unnecessary friction. The most successful teams flip this model by giving domain experts tools to write and iterate on prompts directly.

Construct Bridges, Not Gatekeepers

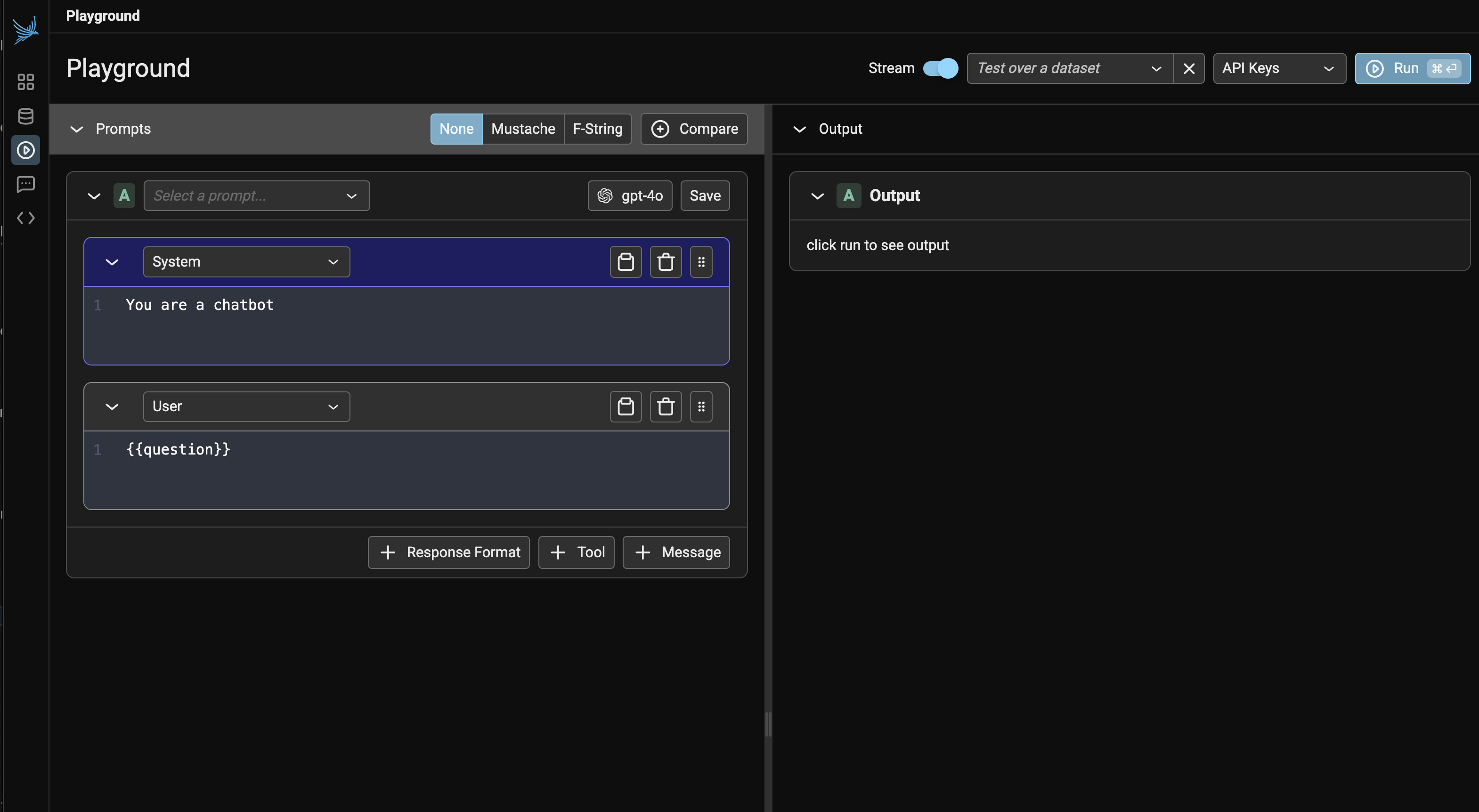

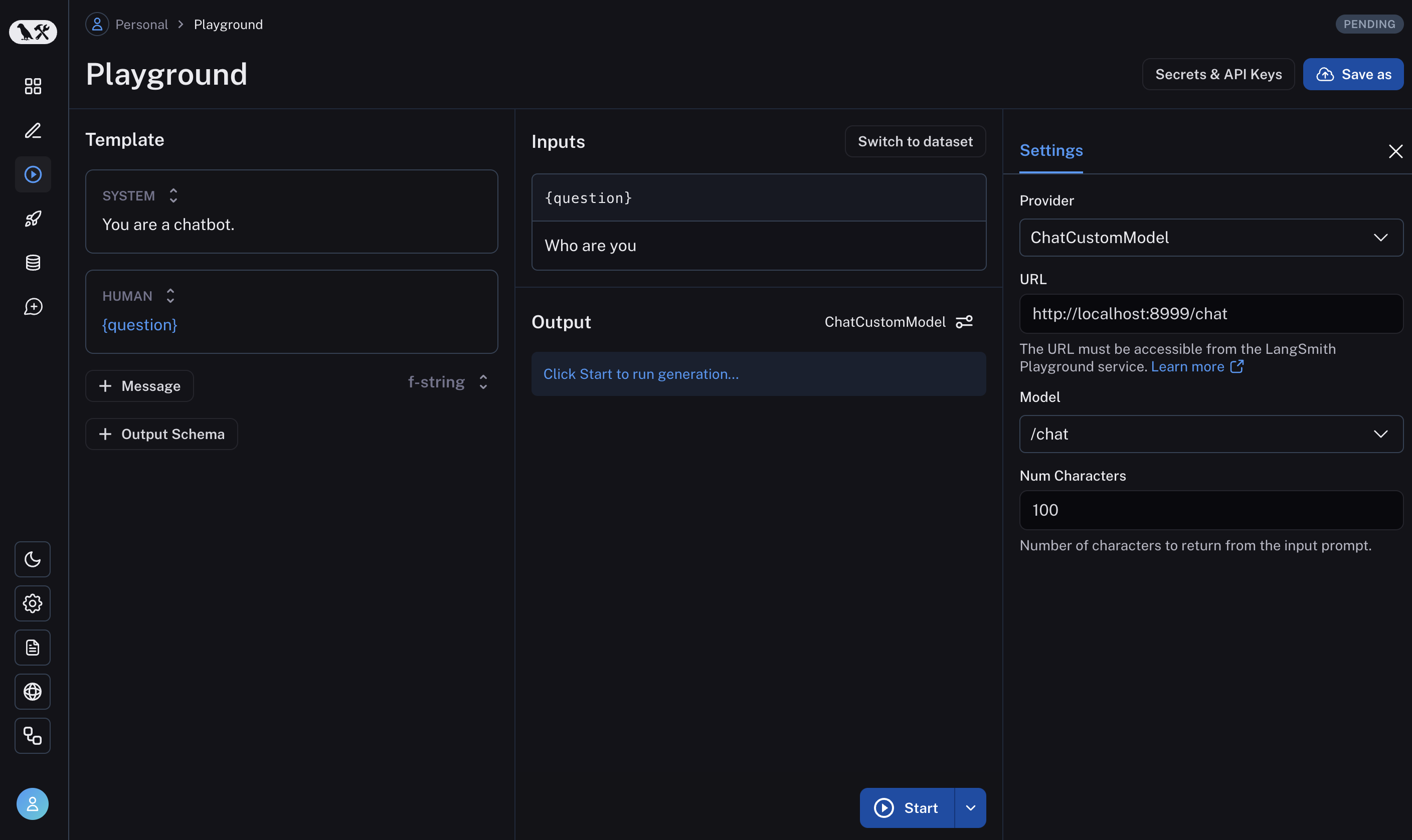

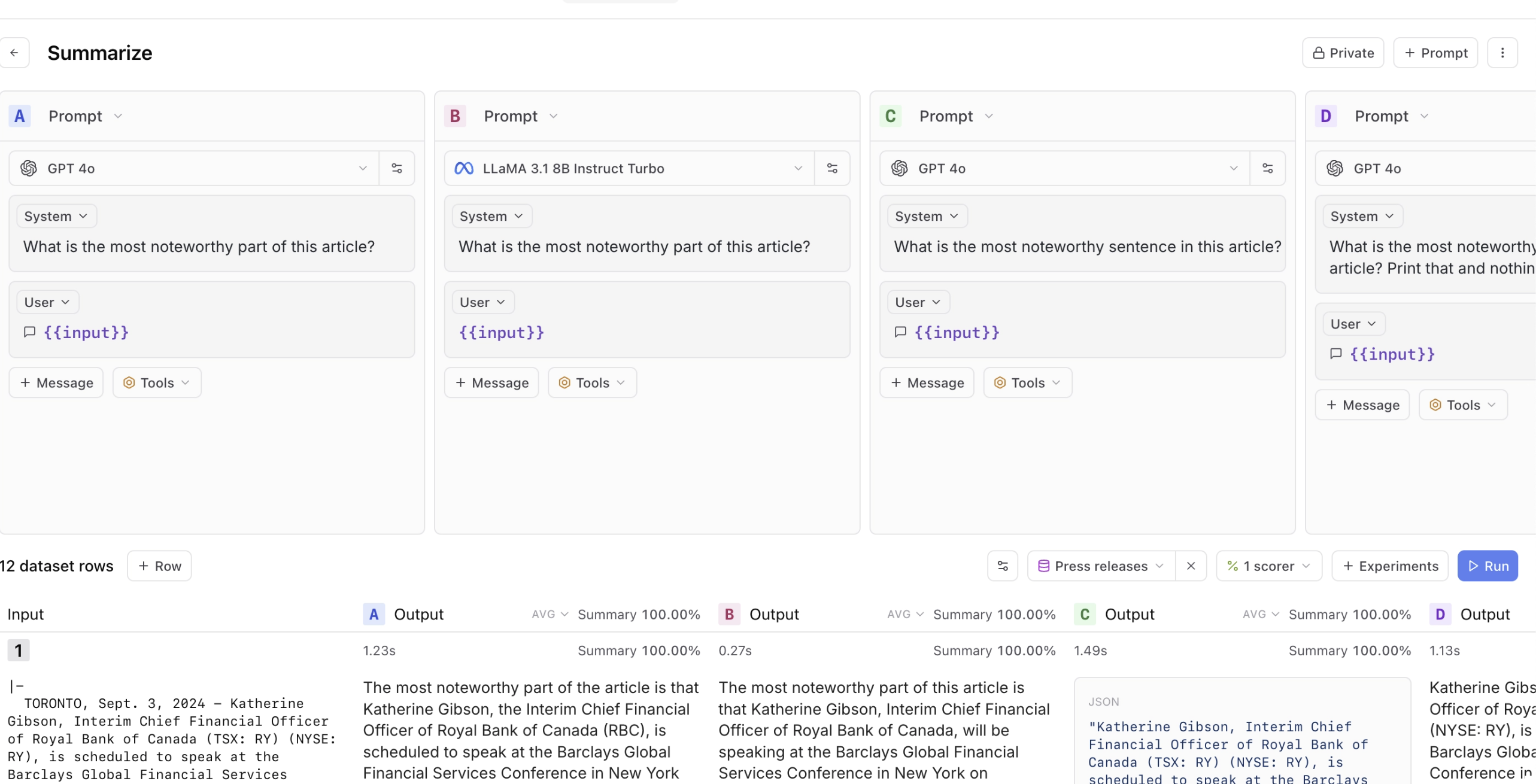

Prompt playgrounds are a great starting point for this. Tools like Arize, LangSmith, and Braintrust let teams quickly test different prompts, feed in example datasets, and compare results. Here are some screenshots of these tools:

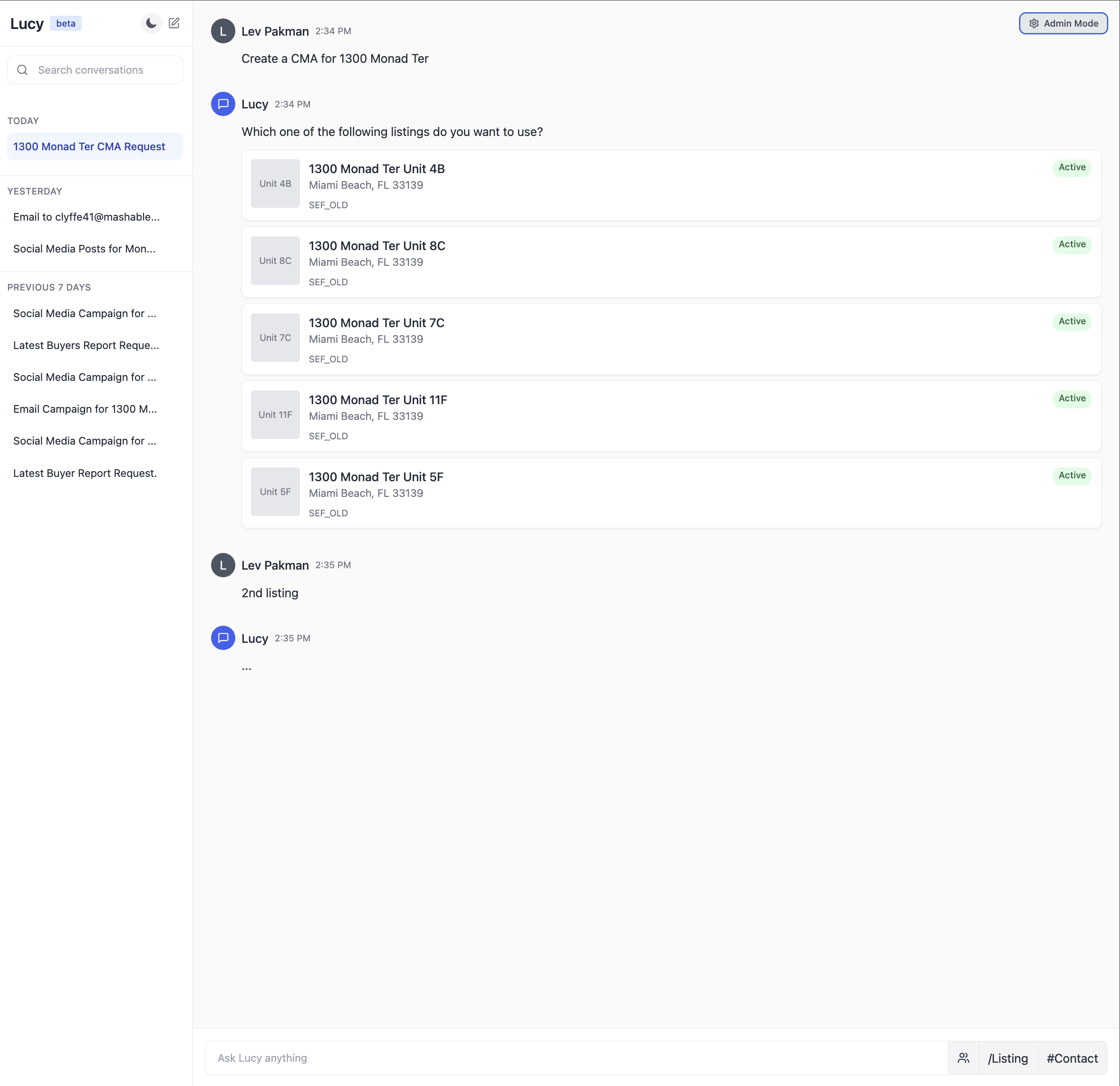

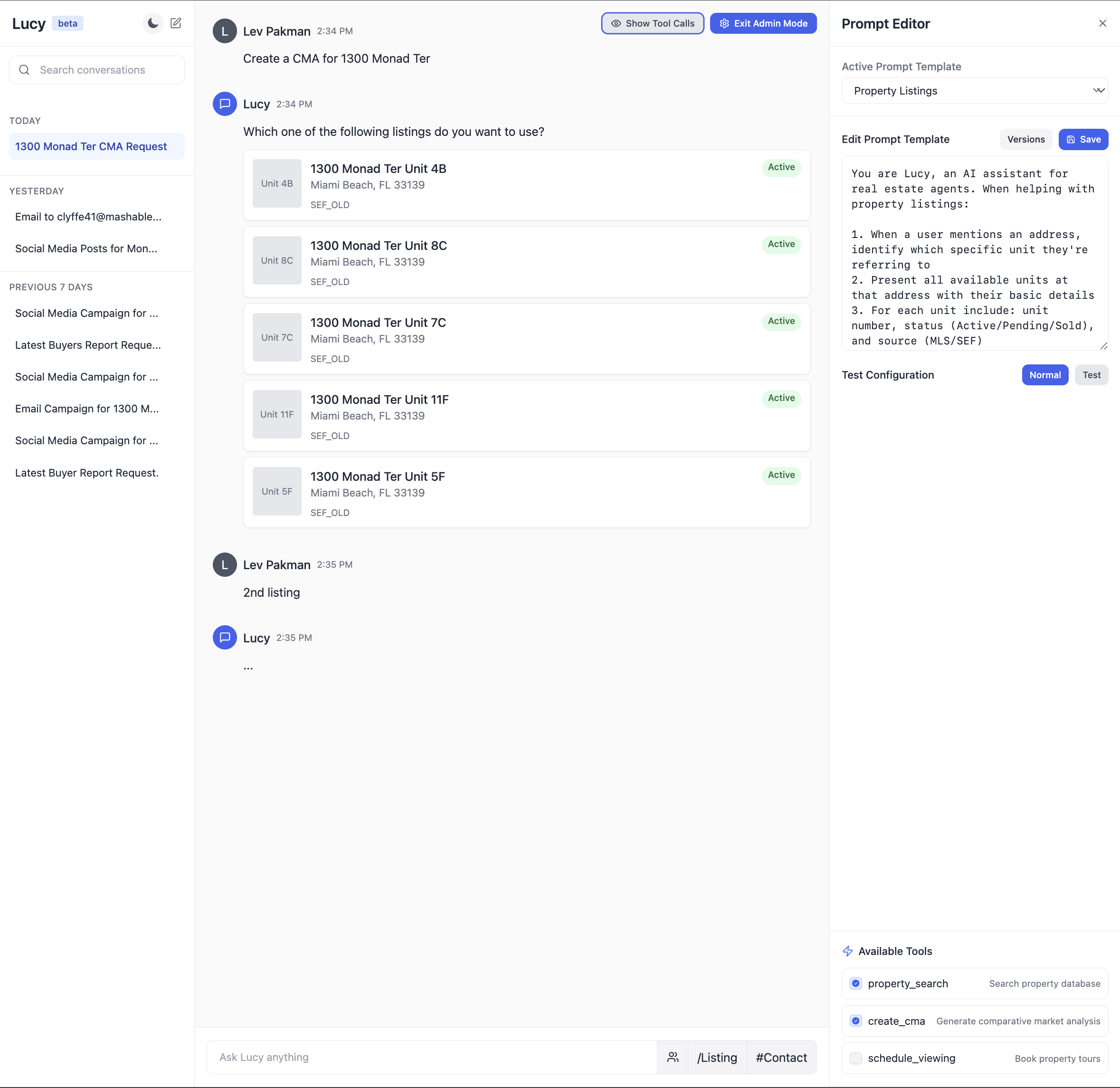

But there’s a crucial next step that many teams miss: integrating prompt development into their application context. Most AI applications aren’t just prompts; they commonly involve RAG systems pulling from your knowledge base, agent orchestration coordinating multiple steps, and application-specific business logic. The most effective teams I’ve worked with go beyond stand-alone playgrounds. They build what I call integrated prompt environments: essentially admin versions of their actual user interface that expose prompt editing.

Here’s an illustration of what an integrated prompt environment might look like for a real estate AI assistant:

Tips For Communicating With Domain Experts

There’s another barrier that often prevents domain experts from contributing effectively: unnecessary jargon. I was working with an education startup where engineers, product managers, and learning specialists were talking past each other in meetings. The engineers kept saying, “We’re going to build an agent that does XYZ,” when really the job to be done was writing a prompt. This created an artificial barrier: the learning specialists, who were the actual domain experts, felt like they couldn’t contribute because they didn’t understand “agents.”

This happens everywhere. I’ve seen it with lawyers at legal tech companies, psychologists at mental health startups, and doctors at healthcare firms. The magic of LLMs is that they make AI accessible through natural language, but we often destroy that advantage by wrapping everything in technical terminology.

Here’s a simple example of how to translate common AI jargon:

| Instead of saying… | Say… |
|---|---|
| “We’re implementing a RAG approach.” | “We’re making sure the model has the right context to answer questions.” |
| “We need to prevent prompt injection.” | “We need to make sure users can’t trick the AI into ignoring our rules.” |
| “Our model suffers from hallucination issues.” | “Sometimes the AI makes things up, so we need to check its answers.” |

This doesn’t mean dumbing things down. It means being precise about what you’re actually doing. When you say, “We’re building an agent,” what specific capability are you adding? Is it function calling? Tool use? Or just a better prompt? Being specific helps everyone understand what’s actually happening.

There’s nuance here. Technical terminology exists for a reason: it provides precision when talking with other technical stakeholders. The key is adapting your language to your audience.

The challenge many teams raise at this point is “This all sounds great, but what if we don’t have any data yet? How can we look at examples or iterate on prompts when we’re just starting out?” That’s what we’ll talk about next.

Bootstrapping Your AI With Synthetic Data Is Effective (Even With Zero Users)

One of the most common roadblocks I hear from teams is “We can’t do proper evaluation because we don’t have enough real user data yet.” This creates a chicken-and-egg problem: you need data to improve your AI, but you need a decent AI to get users who generate that data.

Fortunately, there’s a solution that works surprisingly well: synthetic data. LLMs can generate realistic test cases that cover the range of scenarios your AI will encounter.

As I wrote in my LLM-as-a-Judge blog post, synthetic data can be remarkably effective for evaluation. Bryan Bischof, the former head of AI at Hex, put it perfectly:

> LLMs are surprisingly good at generating excellent – and diverse – examples of user prompts. This can be relevant for powering application features, and sneakily, for building Evals. If this sounds a bit like the Large Language Snake is eating its tail, I was just as surprised as you! All I can say is: it works, ship it.

A Framework for Generating Realistic Test Data

The key to effective synthetic data is choosing the right dimensions to test. While these dimensions will vary based on your specific needs, I find it helpful to think about three broad categories:

- Features: What capabilities does your AI need to support?

- Scenarios: What situations will it encounter?

- User personas: Who will be using it and how?

These aren’t the only dimensions you might care about. You might also want to test different tones of voice, levels of technical sophistication, or even different locales and languages. The important thing is identifying dimensions that matter for your specific use case.

For a real estate CRM AI assistant I worked on with Rechat, we defined these dimensions like this:
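As a rough sketch of what dimension definitions can look like in code, the lists below use only the feature, scenario, and persona values that appear in the example table later in this post. The variable names and the choice to take a full cross product are assumptions for illustration, not the actual Rechat definitions.

```python
from itertools import product

# Values taken from the example table in this post; real lists would be longer.
features = ["property search", "market analysis"]
scenarios = ["multiple matches", "no matches"]
personas = ["first_time_buyer", "investor"]

# Every combination of the three dimensions becomes one test-case specification.
test_matrix = [
    {"feature": feature, "scenario": scenario, "persona": persona}
    for feature, scenario, persona in product(features, scenarios, personas)
]

print(f"{len(test_matrix)} combinations to generate queries for")
```

Each entry can then be handed to a query generator, which keeps coverage explicit: a missing combination is visible as a missing row.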

But having these dimensions defined is only half the battle. The real challenge is ensuring your synthetic data actually triggers the scenarios you want to test. This requires two things:

- A test database with enough variety to support your scenarios

- A way to verify that generated queries actually trigger intended scenarios

For Rechat, we maintained a test database of listings that we knew would trigger different edge cases. Some teams prefer to use an anonymized copy of production data, but either way, you need to ensure your test data has enough variety to exercise the scenarios you care about.

Here’s an example of how we might use these dimensions with real data to generate test cases for the property search feature (this is just pseudo code, and very illustrative):

```python
def generate_search_query(scenario, persona, listing_db):
    """Generate a realistic user query about listings."""
    # Pull real listing data to ground the generation
    sample_listings = listing_db.get_sample_listings(
        price_range=persona.price_range,
        location=persona.preferred_areas,
    )

    # Verify we have listings that will trigger our scenario
    if scenario == "multiple_matches" and len(sample_listings) < 2:
        raise ValueError("Need multiple listings for this scenario")
    if scenario == "no_matches" and len(sample_listings) > 0:
        raise ValueError("Found matches when testing no-match scenario")

    # format_listings and generate_with_llm are helpers assumed to exist elsewhere
    prompt = f"""
    You are an expert real estate agent who is searching for listings. You are given a customer type and a scenario.

    Your job is to generate a natural language query you would use to search these listings.

    Context:
    - Customer type: {persona.description}
    - Scenario: {scenario}

    Use these actual listings as reference:
    {format_listings(sample_listings)}

    The query should reflect the customer type and the scenario.

    Example query: Find homes in the 75019 zip code, 3 bedrooms, 2 bathrooms, price range $750k - $1M for an investor.
    """
    return generate_with_llm(prompt)
```

This produced realistic queries like:

| Feature | Scenario | Persona | Generated Query |
|---|---|---|---|
| property search | multiple matches | first_time_buyer | “Looking for 3-bedroom homes under $500k in the Riverside area. Would love something close to parks since we have young kids.” |
| market analysis | no matches | investor | “Need comps for 123 Oak St. Specifically interested in rental yield comparison with similar properties in a 2-mile radius.” |

The key to useful synthetic data is grounding it in real system constraints. For the real-estate AI assistant, this means:

- Using real listing IDs and addresses from their database

- Incorporating actual agent schedules and availability windows

- Respecting business rules like showing restrictions and notice periods

- Including market-specific details like HOA requirements or local regulations

We then feed these test cases through Lucy (now part of Capacity) and log the interactions. This gives us a rich dataset to analyze, showing exactly how the AI handles different situations with real system constraints. This approach helped us fix issues before they affected real users.

Sometimes you don’t have access to a production database, especially for new products. In these cases, use LLMs to generate both test queries and the underlying test data. For a real estate AI assistant, this might mean creating synthetic property listings with realistic attributes: prices that match market ranges, valid addresses with real street names, and amenities appropriate for each property type. The key is grounding synthetic data in real-world constraints to make it useful for testing. The specifics of generating robust synthetic databases are beyond the scope of this post.

Guidelines for Using Synthetic Data

When generating synthetic data, follow these key principles to ensure it’s effective:

- Diversify your dataset: Create examples that cover a wide range of features, scenarios, and personas. As I wrote in my LLM-as-a-Judge post, this diversity helps you identify edge cases and failure modes you might not anticipate otherwise.

- Generate user inputs, not outputs: Use LLMs to generate realistic user queries or inputs, not the expected AI responses. This prevents your synthetic data from inheriting the biases or limitations of the generating model.

- Incorporate real system constraints: Ground your synthetic data in actual system limitations and data. For example, when testing a scheduling feature, use real availability windows and booking rules.

- Verify scenario coverage: Ensure your generated data actually triggers the scenarios you want to test. A query intended to test “no matches found” should actually return zero results when run against your system.

- Start simple, then add complexity: Begin with straightforward test cases before adding nuance. This helps isolate issues and establish a baseline before tackling edge cases.
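The "verify scenario coverage" guideline is the easiest one to automate. Here is a minimal sketch: `run_search` is a hypothetical stand-in for executing a query against your real system, the toy listings are invented, and the rule that "multiple matches" means at least two results is an assumption.

```python
def triggers_scenario(scenario, result_count):
    """Check whether a result count is consistent with the intended scenario."""
    if scenario == "no_matches":
        return result_count == 0
    if scenario == "multiple_matches":
        return result_count >= 2
    raise ValueError(f"Unknown scenario: {scenario}")


def keep_valid_cases(cases, run_search):
    """Keep only generated cases whose query really triggers its scenario."""
    return [
        case for case in cases
        if triggers_scenario(case["scenario"], len(run_search(case["query"])))
    ]


# Toy stand-in for the real search backend: match listings by zip code substring.
LISTINGS = [{"zip": "75019", "beds": 3}, {"zip": "75019", "beds": 4}]


def run_search(query):
    return [listing for listing in LISTINGS if listing["zip"] in query]


cases = [
    {"scenario": "multiple_matches", "query": "3+ bedroom homes in 75019"},
    {"scenario": "no_matches", "query": "homes in 99999"},
    {"scenario": "no_matches", "query": "anything available in 75019?"},  # mislabeled
]
valid = keep_valid_cases(cases, run_search)
```

The third case is dropped because its query returns results even though it was generated to test the no-match path.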

This approach isn’t just theoretical. It’s been proven in production across dozens of companies. What often starts as a stopgap measure becomes a permanent part of the evaluation infrastructure, even after real user data becomes available.

Let’s look at how to maintain trust in your evaluation system as you scale.

Maintaining Trust In Evals Is Critical

This is a pattern I’ve seen repeatedly: Teams build evaluation systems, then gradually lose faith in them. Sometimes it’s because the metrics don’t align with what they observe in production. Other times, it’s because the evaluations become too complex to interpret. Either way, the result is the same: The team reverts to making decisions based on gut feeling and anecdotal feedback, undermining the entire purpose of having evaluations.

Maintaining trust in your evaluation system is just as important as building it in the first place. Here’s how the most successful teams approach this challenge.

Understanding Criteria Drift

One of the most insidious problems in AI evaluation is “criteria drift,” a phenomenon where evaluation criteria evolve as you observe more model outputs. In their paper “Who Validates the Validators? Aligning LLM-Assisted Evaluation of LLM Outputs with Human Preferences,” Shankar et al. describe this phenomenon:

> To grade outputs, people need to externalize and define their evaluation criteria; however, the process of grading outputs helps them to define that very criteria.

This creates a paradox: You can’t fully define your evaluation criteria until you’ve seen a wide range of outputs, but you need criteria to evaluate those outputs in the first place. In other words, it’s impossible to completely determine evaluation criteria prior to human judging of LLM outputs.

I’ve observed this firsthand when working with Phillip Carter at Honeycomb on the company’s Query Assistant feature. As we evaluated the AI’s ability to generate database queries, Phillip noticed something interesting:

> Seeing how the LLM breaks down its reasoning made me realize I wasn’t being consistent about how I judged certain edge cases.

The process of reviewing AI outputs helped him articulate his own evaluation standards more clearly. This isn’t a sign of poor planning. It’s an inherent characteristic of working with AI systems that produce diverse and sometimes unexpected outputs.

The teams that maintain trust in their evaluation systems embrace this reality rather than fighting it. They treat evaluation criteria as living documents that evolve alongside their understanding of the problem space. They also recognize that different stakeholders might have different (sometimes contradictory) criteria, and they work to reconcile these perspectives rather than imposing a single standard.

Creating Trustworthy Evaluation Systems

So how do you build evaluation systems that remain trustworthy despite criteria drift? Here are the approaches I’ve found most effective:

1. Favor Binary Decisions Over Arbitrary Scales

As I wrote in my LLM-as-a-Judge post, binary decisions provide clarity that more complex scales often obscure. When faced with a 1–5 scale, evaluators frequently struggle with the difference between a 3 and a 4, introducing inconsistency and subjectivity. What exactly distinguishes “somewhat helpful” from “helpful”? These boundary cases consume disproportionate mental energy and create noise in your evaluation data. And even when businesses use a 1–5 scale, they inevitably ask where to draw the line for “good enough” or to trigger intervention, forcing a binary decision anyway.

In contrast, a binary pass/fail forces evaluators to make a clear judgment: Did this output achieve its purpose or not? This clarity extends to measuring progress: a 10% increase in passing outputs is immediately meaningful, while a 0.5-point improvement on a 5-point scale requires interpretation.

I’ve found that teams who resist binary evaluation often do so because they want to capture nuance. But nuance isn’t lost. It’s just moved to the qualitative critique that accompanies the judgment. The critique provides rich context about why something passed or failed and what specific aspects could be improved, while the binary decision creates actionable clarity about whether improvement is needed at all.

2. Enhance Binary Judgments With Detailed Critiques

While binary decisions provide clarity, they work best when paired with detailed critiques that capture the nuance of why something passed or failed. This combination gives you the best of both worlds: clear, actionable metrics and rich contextual understanding.

For example, when evaluating a response that correctly answers a user’s question but contains unnecessary information, a good critique might read:

> The AI successfully provided the market analysis requested (PASS), but included excessive detail about neighborhood demographics that wasn’t relevant to the investment question. This makes the response longer than necessary and potentially distracting.

These critiques serve multiple functions beyond just explanation. They force domain experts to externalize implicit knowledge. I’ve seen legal experts move from vague feelings that something “doesn’t sound right” to articulating specific issues with citation formats or reasoning patterns that can be systematically addressed.
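One way to keep the verdict and the critique together is to store them as a single record. The sketch below is an assumed structure, not a prescribed schema: the `Judgment` class, its field names, and the sample critiques are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Judgment:
    output_id: str
    passed: bool    # the binary decision
    critique: str   # the nuance: why it passed or failed


def pass_rate(judgments):
    """Share of outputs that passed; directly comparable across iterations."""
    return sum(j.passed for j in judgments) / len(judgments)


def failing_critiques(judgments):
    """Critiques of failed outputs, the raw material for the next round of fixes."""
    return [j.critique for j in judgments if not j.passed]


judgments = [
    Judgment("r1", True, "Answered the market analysis question; extra demographic detail was unneeded."),
    Judgment("r2", True, "Correct comps and a clear rental yield comparison."),
    Judgment("r3", False, "Quoted a listing that is no longer on the market."),
    Judgment("r4", True, "Concise and on topic."),
]

print(f"pass rate: {pass_rate(judgments):.0%}")
print(failing_critiques(judgments))
```

The pass rate gives the single number to track, while the critiques carry the detail a 1–5 score would have tried to compress.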

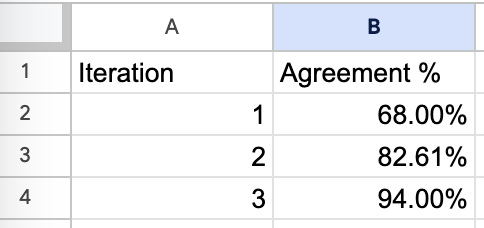

When included as few-shot examples in judge prompts, these critiques improve the LLM’s ability to reason about complex edge cases. I’ve found this approach often yields 15%–20% higher agreement rates between human and LLM evaluations compared to prompts without example critiques. The critiques also provide excellent raw material for generating high-quality synthetic data, creating a flywheel for improvement.
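A minimal sketch of how human critiques might be folded into a judge prompt as few-shot examples follows. The prompt wording, the function name, and the example data are assumptions; the only idea taken from the text is that each example carries a critique alongside its pass/fail verdict.

```python
def build_judge_prompt(criteria, examples, candidate):
    """Assemble a judge prompt from (output, passed, critique) examples.

    The critique is placed before the verdict so the judge is nudged to
    reason first and decide second (a design choice, not a requirement).
    """
    parts = [f"You are evaluating an AI assistant's output.\nCriteria: {criteria}\n"]
    for i, (output, passed, critique) in enumerate(examples, start=1):
        verdict = "PASS" if passed else "FAIL"
        parts.append(
            f"Example {i}\nOutput: {output}\nCritique: {critique}\nVerdict: {verdict}\n"
        )
    parts.append(f"Now evaluate this output.\nOutput: {candidate}\nCritique:")
    return "\n".join(parts)


examples = [
    ("Here are three comparable sales near 123 Oak St ...", True,
     "Provided the requested market analysis and stayed on topic."),
    ("The property is available for a tour last Tuesday.", False,
     "Proposed a date in the past, so the user cannot act on it."),
]

prompt = build_judge_prompt(
    criteria="The response must resolve the user's request accurately.",
    examples=examples,
    candidate="I found two listings under $500k in Riverside ...",
)
```

The returned string would be sent to whichever model acts as the judge, and its answer parsed for the final PASS or FAIL.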

3. Measure Alignment Between Automated Evals and Human Judgment

If you’re using LLMs to evaluate outputs (which is often necessary at scale), it’s crucial to regularly check how well these automated evaluations align with human judgment.

This is particularly important given our natural tendency to over-trust AI systems. As Shankar et al. note in “Who Validates the Validators?,” the lack of tools to validate evaluator quality is concerning.

> Research shows people tend to over-rely and over-trust AI systems. For instance, in one high profile incident, researchers from MIT posted a pre-print on arXiv claiming that GPT-4 could ace the MIT EECS exam. Within hours, [the] work [was] debunked. . .citing problems arising from over-reliance on GPT-4 to grade itself.

This overtrust problem extends beyond self-evaluation. Research has shown that LLMs can be biased by simple factors like the ordering of options in a set or even seemingly innocuous formatting changes in prompts. Without rigorous human validation, these biases can silently undermine your evaluation system.

When working with Honeycomb, we tracked agreement rates between our LLM-as-a-judge and Phillip’s evaluations:

It took three iterations to achieve >90% agreement, but this investment paid off in a system the team could trust. Without this validation step, automated evaluations often drift from human expectations over time, especially as the distribution of inputs changes. You can read more about this here.
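Tracking agreement needs very little machinery. The sketch below assumes binary labels from a human and from the judge on the same set of outputs; the IDs and labels are invented and are not the Honeycomb data.

```python
def agreement_rate(human_labels, judge_labels):
    """Fraction of items where the LLM judge matches the human verdict."""
    if not human_labels or len(human_labels) != len(judge_labels):
        raise ValueError("Need two non-empty label lists of equal length")
    matches = sum(h == j for h, j in zip(human_labels, judge_labels))
    return matches / len(human_labels)


def disagreements(ids, human_labels, judge_labels):
    """IDs to review by hand: the cases where judge and human differ."""
    return [i for i, h, j in zip(ids, human_labels, judge_labels) if h != j]


# Hypothetical pass/fail labels for ten outputs.
ids = [f"q{n}" for n in range(1, 11)]
human = [True, True, False, True, False, True, True, False, True, True]
judge = [True, True, False, True, True, True, True, False, True, True]

print(f"agreement: {agreement_rate(human, judge):.0%}")
print("review these:", disagreements(ids, human, judge))
```

Reading the disagreements is usually more informative than the rate itself, since each one points either at a gap in the judge prompt or at an inconsistency in the human criteria.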

Tools like Eugene Yan’s AlignEval demonstrate this alignment process beautifully. AlignEval provides a simple interface where you upload data, label examples with a binary “good” or “bad,” and then evaluate LLM-based judges against those human judgments. What makes it effective is how it streamlines the workflow: you can quickly see where automated evaluations diverge from your preferences, refine your criteria based on these insights, and measure improvement over time. This approach reinforces that alignment isn’t a one-time setup but an ongoing conversation between human judgment and automated evaluation.

Scaling Without Losing Trust

As your AI system grows, you’ll inevitably face pressure to reduce the human effort involved in evaluation. This is where many teams go wrong: they automate too much, too quickly, and lose the human connection that keeps their evaluations grounded.

The most successful teams take a more measured approach:

- Start with high human involvement: In the early stages, have domain experts evaluate a significant percentage of outputs.

- Study alignment patterns: Rather than automating evaluation, focus on understanding where automated evaluations align with human judgment and where they diverge. This helps you identify which types of cases need more careful human attention.

- Use strategic sampling: Rather than evaluating every output, use statistical techniques to sample outputs that provide the most information, particularly focusing on areas where alignment is weakest.

- Maintain regular calibration: Even as you scale, continue to compare automated evaluations against human judgment regularly, using these comparisons to refine your understanding of when to trust automated evaluations.
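Strategic sampling can take many forms. One simple option, sketched here as an assumption rather than a recommended standard, is to split a fixed human-review budget across categories in proportion to how often the judge and humans have disagreed in each. The category names, rates, and budget are invented.

```python
import random


def allocate_review_budget(disagreement_rate, budget):
    """Split `budget` reviews across categories proportional to disagreement.

    Uses largest-remainder rounding so the allocations sum to the budget.
    Falls back to an even split when there is no disagreement signal.
    """
    total = sum(disagreement_rate.values())
    n = len(disagreement_rate)
    raw = {
        cat: budget * (rate / total if total > 0 else 1 / n)
        for cat, rate in disagreement_rate.items()
    }
    alloc = {cat: int(share) for cat, share in raw.items()}
    leftover = budget - sum(alloc.values())
    by_remainder = sorted(raw, key=lambda cat: raw[cat] - alloc[cat], reverse=True)
    for cat in by_remainder[:leftover]:
        alloc[cat] += 1
    return alloc


def sample_for_review(outputs_by_category, alloc, seed=0):
    """Randomly pick the allocated number of outputs from each category."""
    rng = random.Random(seed)
    picked = []
    for cat, outputs in outputs_by_category.items():
        k = min(alloc.get(cat, 0), len(outputs))
        picked.extend(rng.sample(outputs, k))
    return picked


# Hypothetical judge-versus-human disagreement rates per category.
rates = {"scheduling": 0.30, "pricing": 0.10, "greetings": 0.0}
alloc = allocate_review_budget(rates, budget=20)

outputs = {
    "scheduling": [f"s{i}" for i in range(40)],
    "pricing": [f"p{i}" for i in range(3)],
    "greetings": [f"g{i}" for i in range(10)],
}
picked = sample_for_review(outputs, alloc)
```

In practice you would likely keep a small floor for every category so that a category with zero observed disagreement still gets an occasional spot check.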

Scaling evaluation isn’t just about reducing human effort. It’s about directing that effort where it adds the most value. By focusing human attention on the most challenging or informative cases, you can maintain quality even as your system grows.

Now that we’ve covered how to maintain trust in your evaluations, let’s talk about a fundamental shift in how you should approach AI development roadmaps.

Your AI Roadmap Should Count Experiments, Not Features

If you’ve worked in software development, you’re familiar with traditional roadmaps: a list of features with target delivery dates. Teams commit to shipping specific functionality by specific deadlines, and success is measured by how closely they hit those targets.

This approach fails spectacularly with AI.

I’ve watched teams commit to roadmap objectives like “Launch sentiment analysis by Q2” or “Deploy agent-based customer support by end of year,” only to discover that the technology simply isn’t ready to meet their quality bar. They either ship something subpar to hit the deadline or miss the deadline entirely. Either way, trust erodes.

The fundamental problem is that traditional roadmaps assume we know what’s possible. With conventional software, that’s often true: given enough time and resources, you can build most features reliably. With AI, especially at the cutting edge, you’re constantly testing the boundaries of what’s feasible.

Experiments Versus Features

Bryan Bischof, former head of AI at Hex, introduced me to what he calls a “capability funnel” approach to AI roadmaps. This strategy reframes how we think about AI development progress. Instead of defining success as shipping a feature, the capability funnel breaks down AI performance into progressive levels of utility. At the top of the funnel is the most basic functionality: Can the system respond at all? At the bottom is fully solving the user’s job to be done. Between these points are various stages of increasing usefulness.

For example, in a query assistant, the capability funnel might look like:

- Can generate syntactically valid queries (basic functionality)

- Can generate queries that execute without errors

- Can generate queries that return relevant results

- Can generate queries that match user intent

- Can generate optimal queries that solve the user’s problem (complete solution)

This approach acknowledges that AI progress isn’t binary; it’s about gradually improving capabilities across multiple dimensions. It also provides a framework for measuring progress even when you haven’t reached the final goal.
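One way to make the funnel measurable is to record, for each evaluated query, which stages it cleared, and then report the share of examples that clear every stage up to a given point. The sketch below is an illustration, not Bryan’s implementation: the stage names, the `funnel_report` helper, and the sample records are all hypothetical.

```python
from typing import Dict, List

# Hypothetical stage names, ordered from the top of the funnel to the bottom.
STAGES = [
    "valid_syntax",
    "executes",
    "relevant_results",
    "matches_intent",
    "solves_problem",
]


def funnel_report(records: List[Dict[str, bool]]) -> Dict[str, float]:
    """Return, per stage, the fraction of records that pass it and all earlier stages."""
    total = len(records)
    report: Dict[str, float] = {}
    survivors = records
    for stage in STAGES:
        # A record with no entry for a stage is treated as not having passed it.
        survivors = [r for r in survivors if r.get(stage, False)]
        report[stage] = len(survivors) / total if total else 0.0
    return report


# Made-up evaluation results for four generated queries.
sample = [
    {"valid_syntax": True, "executes": True, "relevant_results": True,
     "matches_intent": True, "solves_problem": True},
    {"valid_syntax": True, "executes": True, "relevant_results": True,
     "matches_intent": False, "solves_problem": False},
    {"valid_syntax": True, "executes": False},
    {"valid_syntax": False},
]

for stage, share in funnel_report(sample).items():
    print(f"{stage:<18} {share:.0%}")
```

Reading the report from top to bottom shows where examples drop out, which is the stage most worth investigating next.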

The most successful teams I’ve worked with structure their roadmaps around experiments rather than features. Instead of committing to specific outcomes, they commit to a cadence of experimentation, learning, and iteration.

Eugene Yan, an applied scientist at Amazon, shared how he approaches ML project planning with leadership, a process that, while originally developed for traditional machine learning, applies equally well to modern LLM development:

Here’s a common timeline. First, I take two weeks to do a data feasibility analysis, i.e., “Do I have the right data?”…Then I take an additional month to do a technical feasibility analysis, i.e., “Can AI solve this?” After that, if it still works I’ll spend six weeks building a prototype we can A/B test.

While LLMs might not require the same kind of feature engineering or model training as traditional ML, the underlying principle remains the same: time-box your exploration, establish clear decision points, and focus on proving feasibility before committing to full implementation. This approach gives leadership confidence that resources won’t be wasted on open-ended exploration, while giving the team the freedom to learn and adapt as they go.
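The timeline Eugene describes can be written down as a sequence of time-boxed phases, each ending in a go/no-go decision. This is a minimal sketch under assumptions: `Phase`, `PLAN`, and `run_plan` are hypothetical names, “an additional month” is approximated as four weeks, and the question attached to the prototype phase is my own paraphrase.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Phase:
    name: str
    weeks: int      # time box for this phase
    question: str   # what must be answered before moving on


# Durations follow the quoted timeline; one month is assumed to be four weeks.
# The prototype question is a paraphrase, not part of the quote.
PLAN = [
    Phase("data feasibility", 2, "Do I have the right data?"),
    Phase("technical feasibility", 4, "Can AI solve this?"),
    Phase("prototype", 6, "Is the prototype ready to A/B test?"),
]


def run_plan(plan: List[Phase], answers: List[bool]) -> Tuple[int, Optional[str]]:
    """Walk the phases in order and stop at the first 'no'.

    Returns (weeks spent, name of the phase that triggered a pivot, or None).
    """
    weeks_spent = 0
    for phase, ok in zip(plan, answers):
        weeks_spent += phase.weeks
        if not ok:
            return weeks_spent, phase.name
    return weeks_spent, None


print(run_plan(PLAN, [True, True, True]))   # every decision point passes
print(run_plan(PLAN, [True, False, True]))  # pivot after technical feasibility
```

With these assumed durations the full plan adds up to twelve weeks, and a “no” at any decision point caps the time spent before a pivot.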

The Foundation: Evaluation Infrastructure

The key to making an experiment-based roadmap work is having robust evaluation infrastructure. Without it, you’re just guessing whether your experiments are working. With it, you can rapidly iterate, test hypotheses, and build on successes.

I saw this firsthand during the early development of GitHub Copilot. What most people don’t realize is that the team invested heavily in building sophisticated offline evaluation infrastructure. They created systems that could test code completions against a very large corpus of repositories on GitHub, leveraging unit tests that already existed in high-quality codebases as an automated way to verify completion correctness. This was a massive engineering undertaking: they had to build systems that could clone repositories at scale, set up their environments, run their test suites, and analyze the results, all while handling the incredible diversity of programming languages, frameworks, and testing approaches.

This wasn’t wasted time; it was the foundation that accelerated everything. With solid evaluation in place, the team ran thousands of experiments, quickly identified what worked, and could say with confidence “This change improved quality by X%” instead of relying on gut feelings. While the upfront investment in evaluation feels slow, it prevents endless debates about whether changes help or hurt and dramatically speeds up innovation later.
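A toy version of the idea, far smaller than anything the Copilot team built, is to let existing tests act as the judge: run each completion against the test for its task and compare pass rates between two system variants. Everything in the sketch below is hypothetical, including the tasks, the `baseline` and `candidate` stand-ins for a completion system, and the `pass_rate` helper.

```python
from typing import Callable, List, Tuple

BinaryFn = Callable[[int, int], int]

# (prompt, test) pairs. The tests stand in for unit tests that already
# exist in a real codebase; all four tasks here are made up.
TASKS: List[Tuple[str, Callable[[BinaryFn], bool]]] = [
    ("add", lambda f: f(2, 3) == 5),
    ("max", lambda f: f(2, 3) == 3),
    ("sub", lambda f: f(5, 3) == 2),
    ("mul", lambda f: f(2, 3) == 6),
]


def baseline(prompt: str) -> BinaryFn:
    """Hypothetical 'old' system: always completes with addition."""
    return lambda a, b: a + b


def candidate(prompt: str) -> BinaryFn:
    """Hypothetical 'new' system: handles three of the four tasks."""
    table = {
        "add": lambda a, b: a + b,
        "max": lambda a, b: max(a, b),
        "sub": lambda a, b: a - b,
    }
    return table.get(prompt, lambda a, b: a + b)


def pass_rate(system: Callable[[str], BinaryFn]) -> float:
    """Fraction of tasks whose test passes; an exception counts as a failure."""
    if not TASKS:
        return 0.0
    passed = 0
    for prompt, test in TASKS:
        try:
            if test(system(prompt)):
                passed += 1
        except Exception:
            pass
    return passed / len(TASKS)


old, new = pass_rate(baseline), pass_rate(candidate)
print(f"baseline {old:.0%}, candidate {new:.0%}, change {(new - old) * 100:+.0f} points")
```

The point of the design is that the comparison runs on a fixed task set with an automated check, so a claimed improvement is a measured difference in pass rate rather than an impression.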

Communicating This to Stakeholders

The challenge, of course, is that executives often want certainty. They want to know when features will ship and what they’ll do. How do you bridge this gap?

The key is to shift the conversation from outputs to outcomes. Instead of promising specific features by specific dates, commit to a process that will maximize the chances of achieving the desired business outcomes.

Eugene shared how he handles these conversations:

I try to reassure leadership with timeboxes. At the end of three months, if it works out, then we move it to production. At any step of the way, if it doesn’t work out, we pivot.

This approach gives stakeholders clear decision points while acknowledging the inherent uncertainty in AI development. It also helps manage expectations about timelines: instead of promising a feature in six months, you’re promising a clear understanding of whether that feature is feasible in three months.

Bryan’s capability funnel approach provides another powerful communication tool. It allows teams to show concrete progress through the funnel stages, even when the final solution isn’t ready. It also helps executives understand where problems are occurring and make informed decisions about where to invest resources.

Build a Culture of Experimentation Through Failure Sharing

Perhaps the most counterintuitive aspect of this approach is the emphasis on learning from failures. In traditional software development, failures are often hidden or downplayed. In AI development, they’re the primary source of learning.

Eugene operationalizes this at his organization through what he calls a “fifteen-five,” a weekly update that takes fifteen minutes to write and five minutes to read:

In my fifteen-fives, I document my failures and my successes. Within our team, we also have weekly “no-prep sharing sessions” where we discuss what we’ve been working on and what we’ve learned. When I do this, I go out of my way to share failures.

This practice normalizes failure as part of the learning process. It shows that even experienced practitioners encounter dead-ends, and it accelerates team learning by sharing those experiences openly. And by celebrating the process of experimentation rather than just the outcomes, teams create an environment where people feel safe taking risks and learning from failures.

A Better Way Forward

So what does an experiment-based roadmap look like in practice? Here’s a simplified example from a content moderation project Eugene worked on:

I was asked to do content moderation. I said, “It’s uncertain whether we’ll meet that goal. It’s uncertain even if that goal is feasible with our data, or what machine learning techniques would work. But here’s my experimentation roadmap. Here are the techniques I’m gonna try, and I’m gonna update you at a two-week cadence.”

The roadmap didn’t promise specific features or capabilities. Instead, it committed to a systematic exploration of possible approaches, with regular check-ins to assess progress and pivot if necessary.

The results were telling:

For the first two to three months, nothing worked. . . .And then [a breakthrough] came out. . . .Within a month, that problem was solved. So you can see that in the first quarter or even four months, it was going nowhere. . . .But then you can also see that all of a sudden, some new technology…, some new paradigm, some new reframing comes along that just [solves] 80% of [the problem].

This pattern, long periods of apparent failure followed by breakthroughs, is common in AI development. Traditional feature-based roadmaps would have killed the project after months of “failure,” missing the eventual breakthrough.

By focusing on experiments rather than features, teams create space for these breakthroughs to emerge. They also build the infrastructure and processes that make breakthroughs more likely: data pipelines, evaluation frameworks, and rapid iteration cycles.

The most successful teams I’ve worked with start by building evaluation infrastructure before committing to specific features. They create tools that make iteration faster and focus on processes that support rapid experimentation. This approach might seem slower at first, but it dramatically accelerates development in the long run by enabling teams to learn and adapt quickly.

The key metric for AI roadmaps isn’t features shipped; it’s experiments run. The teams that win are those that can run more experiments, learn faster, and iterate more quickly than their competitors. And the foundation for this rapid experimentation is always the same: robust, trusted evaluation infrastructure that gives everyone confidence in the results.

By reframing your roadmap around experiments rather than features, you create the conditions for similar breakthroughs in your own organization.

Conclusion

Throughout this post, I’ve shared patterns I’ve observed across dozens of AI implementations. The most successful teams aren’t the ones with the most sophisticated tools or the most advanced models; they’re the ones that master the fundamentals of measurement, iteration, and learning.

The core principles are surprisingly simple:

- Look at your data. Nothing replaces the insight gained from examining real examples. Error analysis consistently reveals the highest-ROI improvements.

- Build simple tools that remove friction. Custom data viewers that make it easy to examine AI outputs yield more insights than complex dashboards with generic metrics.

- Empower domain experts. The people who understand your domain best are often the ones who can most effectively improve your AI, regardless of their technical background.

- Use synthetic data strategically. You don’t need real users to start testing and improving your AI. Thoughtfully generated synthetic data can bootstrap your evaluation process.

- Maintain trust in your evaluations. Binary judgments with detailed critiques create clarity while preserving nuance. Regular alignment checks ensure automated evaluations remain trustworthy.

- Structure roadmaps around experiments, not features. Commit to a cadence of experimentation and learning rather than specific outcomes by specific dates.

These principles apply regardless of your domain, team size, or technical stack. They’ve worked for companies ranging from early-stage startups to tech giants, across use cases from customer support to code generation.

Resources for Going Deeper

If you’d like to explore these topics further, here are some resources that might help:

- My blog for more content on AI evaluation and improvement. My other posts dive into more technical detail on topics such as constructing effective LLM judges, implementing evaluation systems, and other aspects of AI development.[1] Also check out the blogs of Shreya Shankar and Eugene Yan, who are also great sources of information on these topics.

- A course I’m teaching, Rapidly Improve AI Products with Evals, with Shreya Shankar. It provides hands-on experience with techniques such as error analysis, synthetic data generation, and building trustworthy evaluation systems, and includes practical exercises and personalized instruction through office hours.

- If you’re looking for hands-on guidance specific to your organization’s needs, you can learn more about working with me at Parlance Labs.

Footnotes

- I write more broadly about machine learning, AI, and software development. Some posts that expand on these topics include “Your AI Product Needs Evals,” “Creating a LLM-as-a-Judge That Drives Business Results,” and “What We’ve Learned from a Year of Building with LLMs.” You can see all my posts at hamel.dev.