AI reasoning, inference and networking will be top of mind for attendees of next week’s Hot Chips conference.

A key forum for processor and system architects from industry and academia, Hot Chips (running Aug. 24-26 at Stanford University) showcases the latest innovations poised to advance AI factories and drive revenue for the trillion-dollar data center computing market.

At the conference, NVIDIA will join industry leaders including Google and Microsoft in a “tutorial” session, taking place on Sunday, Aug. 24, that discusses designing rack-scale architecture for data centers.

In addition, NVIDIA experts will present at four sessions and one tutorial detailing how:

- NVIDIA networking, including the NVIDIA ConnectX-8 SuperNIC, delivers AI reasoning at rack- and data-center scale. (Featuring Idan Burstein, principal architect of network adapters and systems-on-a-chip at NVIDIA)

- Neural rendering advancements and massive leaps in inference, powered by the NVIDIA Blackwell architecture, including the NVIDIA GeForce RTX 5090 GPU, provide next-level graphics and simulation capabilities. (Featuring Marc Blackstein, senior director of architecture at NVIDIA)

- Co-packaged optics (CPO) switches with integrated silicon photonics, which use optical fiber rather than copper wiring to send information faster while using less power, enable efficient, high-performance, gigawatt-scale AI factories. The talk will also highlight NVIDIA Spectrum-XGS Ethernet, a new scale-across technology for unifying distributed data centers into AI super-factories. (Featuring Gilad Shainer, senior vice president of networking at NVIDIA)

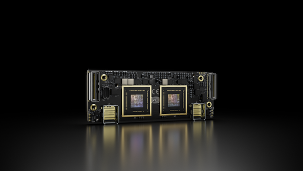

- The NVIDIA GB10 Superchip serves as the engine within the NVIDIA DGX Spark desktop supercomputer. (Featuring Andi Skende, senior distinguished engineer at NVIDIA)

It’s all part of how NVIDIA’s latest technologies are accelerating inference to drive AI innovation everywhere, at every scale.

NVIDIA Networking Fosters AI Innovation at Scale

AI reasoning, in which artificial intelligence systems analyze and solve complex problems through multiple AI inference passes, requires rack-scale performance to deliver optimal user experiences efficiently.

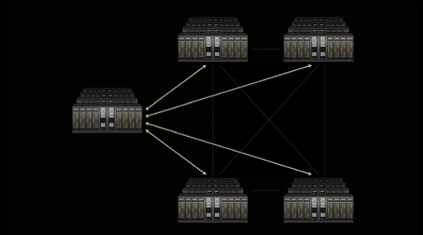

In data centers powering today’s AI workloads, networking acts as the central nervous system, connecting all the components (servers, storage devices and other hardware) into a single, cohesive, powerful computing unit.

Burstein’s Hot Chips session will dive into how NVIDIA networking technologies, particularly NVIDIA ConnectX-8 SuperNICs, enable high-speed, low-latency, multi-GPU communication to deliver market-leading AI reasoning performance at scale.

As part of the NVIDIA networking platform, NVIDIA NVLink, NVLink Switch and NVLink Fusion deliver scale-up connectivity, linking GPUs and compute elements within and across servers for ultra-low-latency, high-bandwidth data exchange.

NVIDIA Spectrum-X Ethernet provides the scale-out fabric to connect entire clusters, rapidly streaming massive datasets into AI models and orchestrating GPU-to-GPU communication across the data center. Spectrum-XGS Ethernet scale-across technology extends the extreme performance and scale of Spectrum-X Ethernet to interconnect multiple, distributed data centers to form AI super-factories capable of giga-scale intelligence.

At the heart of Spectrum-X Ethernet, CPO switches push the limits of performance and efficiency for AI infrastructure at scale, and will be covered in detail by Shainer in his talk.

NVIDIA GB200 NVL72, an exascale computer in a single rack, features 36 NVIDIA GB200 Superchips, each containing two NVIDIA B200 GPUs and an NVIDIA Grace CPU. The superchips are interconnected by the largest NVLink domain ever offered, with NVLink Switch providing 130 terabytes per second of low-latency GPU communications for AI and high-performance computing workloads.
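The rack figures quoted above can be checked with a little arithmetic. The following is a minimal sketch, not an official specification: the counts and the 130 TB/s figure come from the text, while the even per-GPU split and the function name `rack_summary` are illustrative assumptions.

```python
# Figures quoted in the article for one GB200 NVL72 rack.
SUPERCHIPS_PER_RACK = 36
GPUS_PER_SUPERCHIP = 2      # two B200 GPUs per GB200 Superchip
CPUS_PER_SUPERCHIP = 1      # one Grace CPU per GB200 Superchip
NVLINK_AGGREGATE_TB_S = 130.0


def rack_summary():
    """Derive GPU/CPU counts and a rough per-GPU NVLink bandwidth share."""
    gpus = SUPERCHIPS_PER_RACK * GPUS_PER_SUPERCHIP
    cpus = SUPERCHIPS_PER_RACK * CPUS_PER_SUPERCHIP
    # Assumption: the aggregate bandwidth is divided evenly across all GPUs.
    per_gpu_tb_s = NVLINK_AGGREGATE_TB_S / gpus
    return {"gpus": gpus, "cpus": cpus, "per_gpu_tb_s": per_gpu_tb_s}


if __name__ == "__main__":
    summary = rack_summary()
    print(f"{summary['gpus']} GPUs, {summary['cpus']} CPUs, "
          f"~{summary['per_gpu_tb_s']:.2f} TB/s NVLink per GPU")
```

The GPU count of 72 is where the "NVL72" in the product name comes from.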

Built with the NVIDIA Blackwell architecture, GB200 NVL72 systems deliver massive leaps in reasoning inference performance.

NVIDIA Blackwell and CUDA Bring AI to Millions of Developers

The NVIDIA GeForce RTX 5090 GPU, also powered by Blackwell and to be covered in Blackstein’s talk, doubles performance in today’s games with NVIDIA DLSS 4 technology.

It can also add neural rendering features for games to deliver up to 10x performance, 10x footprint amplification and a 10x reduction in design cycles, helping enhance realism in computer graphics and simulation. This offers smooth, responsive visual experiences at low energy consumption and improves the lifelike simulation of characters and effects.

NVIDIA CUDA, the world’s most widely available computing infrastructure, lets users deploy and run AI models using NVIDIA Blackwell anywhere.

Hundreds of millions of GPUs run CUDA across the globe, from NVIDIA GB200 NVL72 rack-scale systems to GeForce RTX and NVIDIA RTX PRO-powered PCs and workstations. NVIDIA DGX Spark, powered by NVIDIA GB10 and discussed in Skende’s session, is coming soon.

From Algorithms to AI Supercomputers — Optimized for LLMs

Delivering powerful performance and capabilities in a compact package, DGX Spark lets developers, researchers, data scientists and students push the limits of generative AI right at their desktops, and accelerate workloads across industries.

As part of the NVIDIA Blackwell platform, DGX Spark brings support for NVFP4, a low-precision numerical format to enable efficient agentic AI inference, particularly of large language models (LLMs). Learn more about NVFP4 on the NVIDIA Technical Blog.
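To give a feel for what a 4-bit floating-point format involves, here is a rough, pure-Python sketch of block-scaled quantization. It assumes the publicly described NVFP4 layout of 4-bit E2M1 values sharing one scale per 16-element block; the scale is held as an ordinary Python float rather than an 8-bit value, the second-level per-tensor scale is omitted, and all function names are hypothetical. It illustrates the idea only and is not NVIDIA's implementation.

```python
# Magnitudes representable by a 4-bit E2M1 float (sign bit handled separately).
E2M1_MAGNITUDES = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)
BLOCK_SIZE = 16  # assumed number of values sharing one scale factor


def quantize_block(values):
    """Map one block of floats to (scale, list of signed E2M1 values)."""
    amax = max((abs(v) for v in values), default=0.0)
    # Pick the scale so the largest magnitude lands on the top code (6.0).
    scale = amax / E2M1_MAGNITUDES[-1] if amax > 0 else 1.0
    codes = []
    for v in values:
        target = abs(v) / scale
        # Round to the nearest representable magnitude.
        mag = min(E2M1_MAGNITUDES, key=lambda m: abs(m - target))
        codes.append(-mag if v < 0 else mag)
    return scale, codes


def dequantize_block(scale, codes):
    """Recover approximate floats from a quantized block."""
    return [scale * c for c in codes]


def quantize(values):
    """Split a flat list into blocks and quantize each independently."""
    return [quantize_block(values[i:i + BLOCK_SIZE])
            for i in range(0, len(values), BLOCK_SIZE)]


if __name__ == "__main__":
    weights = [0.02 * i - 0.3 for i in range(32)]
    for scale, codes in quantize(weights):
        print(round(scale, 4), dequantize_block(scale, codes)[:4])
```

Because each block carries its own scale, a single outlier only coarsens the 16 values in its block rather than the whole tensor, which is the general motivation for block scaling in low-precision formats.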

Open-Source Collaborations Propel Inference Innovation

NVIDIA accelerates several open-source libraries and frameworks to speed up and optimize AI workloads for LLMs and distributed inference. These include NVIDIA TensorRT-LLM, NVIDIA Dynamo, TileIR, CUTLASS, the NVIDIA Collective Communication Library and NIXL, which are integrated into millions of workflows.

Allowing developers to build with their framework of choice, NVIDIA has collaborated with top open framework providers to offer model optimizations for FlashInfer, PyTorch, SGLang, vLLM and others.

Plus, NVIDIA NIM microservices are available for popular open models like OpenAI’s gpt-oss and Llama 4, making it easy for developers to operate managed application programming interfaces with the flexibility and security of self-hosting models on their preferred infrastructure.

Learn more about the latest advancements in inference and accelerated computing by joining NVIDIA at Hot Chips.